Emergency radiology reports play a critical role in guiding rapid clinical decision-making in high-stakes situations such as trauma and acute illness. Even minor errors in these reports can result in misdiagnosis or delayed treatment. Increasing workloads, time pressures, and shortages of radiologists further elevate the risk of reporting errors, including omissions, incorrect terminology, and laterality confusion.

Traditional quality assurance methods, such as double reading by senior radiologists, are often impractical in fast-paced emergency settings. Recent advancements in large language models (LLMs), including GPT-4 and Claude 3.5 Sonnet, have shown promise in automating error detection. However, their application in emergency radiology, particularly in non-English contexts, remains underexplored. DeepSeek-R1, a model optimized for Chinese clinical text, offers a potential solution to improve report accuracy and workflow efficiency.

The aim of this study was to evaluate the performance of DeepSeek-R1 in detecting errors in real-world Chinese emergency radiology reports, compare its accuracy and efficiency with those of other LLMs and of radiologists at different experience levels, and assess its potential integration into clinical workflows. The study also addresses a gap in earlier research by focusing on emergency settings, incorporating real-world datasets, and evaluating performance in a non-English clinical environment.

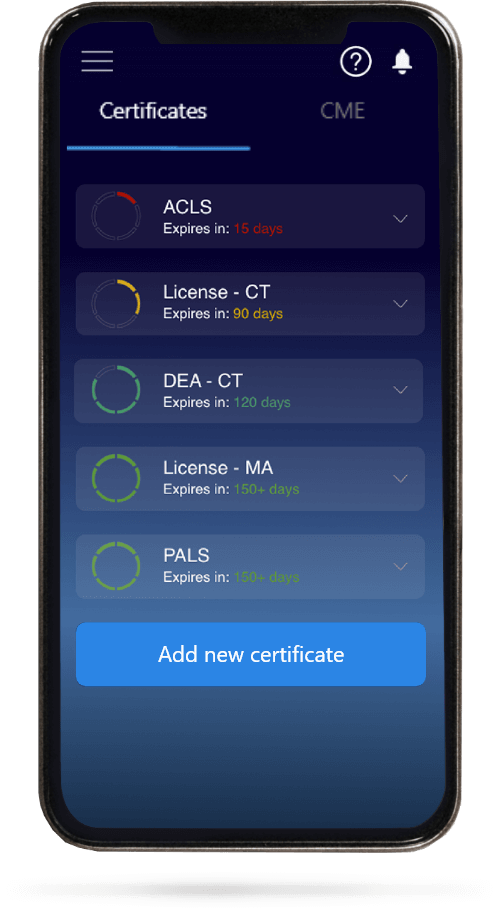

This retrospective study analyzed 7435 anonymized emergency radiology reports (CT, MRI, and radiography) collected from November 2024 to April 2025, supplemented by an external dataset of 100 reports from two additional hospitals. From the primary dataset, 200 error-free reports were selected and verified by experts, with 100 modified to include 127 controlled errors across categories like omission, insertion, spelling, and side confusion. These formed a balanced evaluation dataset. Five LLMs, including DeepSeek-R1, Grok3, and GPT-4o, were tested by using 0-shot and few-shot prompting strategies. The study was conducted in 4 stages: initial model screening, few-shot evaluation, comparison with 12 radiologists (senior, attending, and resident levels), and validation on 800 real-world reports.

Statistical analysis was performed by using R software with key performance metrics including true positive rate (TPR), positive predictive value (PPV), F1-score, and false-positive report rate (FPRR). Confidence intervals (CIs) were calculated by using the Wilson score method. Group comparisons were assessed by using Wald chi-square tests with Bonferroni correction.
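To make the metrics concrete, the following is a minimal sketch of how TPR, PPV, and F1-score follow from error-level counts. The study itself used R; Python is used here only for illustration. The function name `detection_metrics` and the counts in the usage lines are hypothetical and are not taken from the study.

```python
def detection_metrics(true_positives, false_positives, false_negatives):
    """Return (TPR, PPV, F1) from error-level counts.

    TPR: share of real errors that were detected.
    PPV: share of flagged items that were real errors.
    F1:  harmonic mean of PPV and TPR.
    """
    detected_or_missed = true_positives + false_negatives
    flagged = true_positives + false_positives
    if detected_or_missed == 0 or flagged == 0:
        raise ValueError("need at least one real error and one flagged item")
    tpr = true_positives / detected_or_missed
    ppv = true_positives / flagged
    f1 = 0.0 if (tpr + ppv) == 0 else 2 * ppv * tpr / (ppv + tpr)
    return tpr, ppv, f1


# Hypothetical counts, for illustration only (not study data).
tpr, ppv, f1 = detection_metrics(true_positives=8, false_positives=2, false_negatives=2)
print(f"TPR={tpr:.3f} PPV={ppv:.3f} F1={f1:.3f}")
```

Because F1 is a harmonic mean, it is pulled toward the lower of PPV and TPR, which is why a model with high precision but modest sensitivity still receives a moderate F1-score.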

In stage 1, DeepSeek-R1 achieved the highest detection rate (51.2%), outperforming Grok3 (48%) and other models such as GPT-4o (~33%). Its PPV, TPR, and F1-score were 64.4%, 51.2%, and 57%, respectively, with a relatively low false-positive rate (~16.5%). In stages 2 and 3, performance improved significantly with few-shot prompting: DeepSeek-R1’s detection rate increased from 60.9% to 84.4% (P = 0.003), and it significantly outperformed Grok3 in this setting (P < 0.001). Compared with radiologists, DeepSeek-R1 exceeded the performance of residents and achieved results comparable to those of more experienced clinicians, although differences were not always statistically significant. Subgroup analyses showed strong performance in detecting specific error types such as laterality confusion (94% detection in 0-shot) and omission errors (up to 100% in few-shot).

No significant differences were observed in 0-shot settings across imaging modalities. In few-shot settings, DeepSeek-R1 outperformed lower-performing residents (90.3% vs 54.8% in radiography, P = 0.01). On an external dataset, it achieved a detection rate of 95%, demonstrating robustness across institutions. In real-world validation (800 reports), DeepSeek-R1 flagged 207 cases, of which 117 contained true errors (PPV 56.5%), with omission errors being the most common type.
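The real-world PPV is a simple proportion: 117 true errors among 207 flagged cases. As a rough sketch, assuming the Wilson score method named in the statistical analysis is applied to this proportion at the conventional 95% level (z = 1.96), the interval could be computed as below. The function name `wilson_interval` is hypothetical, and the resulting interval is an illustration rather than a figure reported in the source.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score confidence interval for a binomial proportion."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("require n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


# Counts from the real-world validation stage: 117 true errors in 207 flagged cases.
ppv = 117 / 207
low, high = wilson_interval(117, 207)
print(f"PPV={ppv:.3f}, Wilson 95% CI=({low:.3f}, {high:.3f})")
```

Unlike the simple normal-approximation interval, the Wilson interval stays within [0, 1] and behaves sensibly for small samples or proportions near 0% or 100%, which matters for subgroup rates such as the reported 100% detection of omission errors.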

False positives did not involve fabricated findings but rather over-alerting, and about 19% of them were considered beneficial for improving clarity or completeness. Interrater agreement among radiologists varied widely (κ = 0.02 to 0.66), which highlights the subjectivity of error detection. AI-human agreement was more consistent (κ ≈ 0.42 to 0.57). LLMs were also significantly faster than radiologists: DeepSeek-R1 processed reports in about 92 seconds each, compared with 109 to 193 seconds for human readers, which shows clear time-saving potential.
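For readers unfamiliar with κ, the sketch below shows how agreement between two readers is corrected for chance. It assumes Cohen's kappa on per-report labels (1 = error flagged, 0 = no error flagged); the summary does not state which kappa variant the study used, and the label lists are made up for illustration.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: fraction of items with identical labels.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / n**2
    if expected == 1:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Made-up labels for eight reports (not study data).
reader_1 = [1, 1, 0, 0, 1, 0, 1, 0]
reader_2 = [1, 0, 0, 0, 1, 0, 1, 1]
kappa = cohen_kappa(reader_1, reader_2)
print(f"kappa={kappa:.2f}")
```

A κ near 0 means agreement is no better than chance, so the lower end of the reported radiologist range (0.02) indicates that some reader pairs flagged largely different reports.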

DeepSeek-R1 represents a significant advancement in AI-assisted quality assurance for emergency radiology. It shows high sensitivity in detecting clinically relevant errors, performs at a level comparable to or beyond that of less experienced radiologists, and processes reports faster than human readers. The study has limitations, including the use of synthetic errors, a moderate false-positive rate, and limited generalizability to other languages. Even so, the results support its role as a scalable proofreading assistant. With further validation and optimization, specifically in multilingual and real-world clinical settings, DeepSeek-R1 has strong potential to improve report accuracy, reduce workload, and improve patient care in emergency radiology.

Reference: Shen H, Wu T, Wang F, et al. Error Detection in Emergency Radiology Reports Using a Large Language Model: Multistage Evaluation Study. J Med Internet Res. 2026;28:e86841. doi:10.2196/86841